Most people do not struggle because they lack musical ideas. They struggle because ideas arrive in fragments. A phrase appears before breakfast. A hook shows up in the middle of work. A mood is clear, but the arrangement is not. For years, that gap between intention and execution kept many creators from going further. That is why I wanted to test ToMusic, an AI music generator, in a practical way: not as a novelty, but as a working tool. The question was simple: can it help turn scattered ideas into usable music without demanding studio-level skill?

That question matters more than the usual “can AI make a song” discussion. By now, many tools can produce something that sounds like a song. The harder question is whether the process feels productive. A useful platform should not only surprise the user once. It should help them move from rough intent to repeatable output. It should also make revision less painful than starting over from scratch.

So I approached ToMusic the same way an ordinary creator might. I did not treat it like a technical benchmark. I treated it like a tool that had to earn its place in a creative workflow. I wanted to see whether it handled vague prompts, whether it responded better to specific direction, whether lyrics changed the experience, and whether the results felt usable enough for content, demos, or early-stage song development.

What I found is that ToMusic works best when it is understood as a bridge. It is not magic, and it does not remove creative judgment. But in my testing, it lowered the cost of exploration. That is not a small benefit. In real creative work, lowering the cost of exploration is often what makes progress possible.

Why I Tested It Like a Working Creator

There are many ways to review a music platform. One is to focus on promotional claims. Another is to chase a perfect demo. I chose a third route: test it under the kinds of conditions that actual users face.

The Goal Was Practical Usefulness

I was less interested in whether one output sounded impressive in isolation and more interested in whether the platform could support decision-making. Could it help someone compare moods? Could it help a lyric sheet become more concrete? Could it produce results that felt close enough to keep refining?

A Good Demo Is Not the Same as a Good Workflow

This distinction matters. A short clip can sound striking and still be hard to reproduce. For most users, the more meaningful test is whether they can move from one idea to several plausible versions without losing direction.

Ordinary Creators Need Momentum More Than Perfection

A solo creator, marketer, or songwriter often does not need final-release polish on the first pass. They need a draft that reveals where the idea could go. In my observation, ToMusic is strongest when judged by that standard.

The Real Test Was Friction

Every creative tool creates some kind of friction. The question is where that friction appears. With traditional music software, it often appears early in the process because the tools are powerful but demanding. With some generative systems, it appears later because the first result is easy but steering the second result is confusing.

ToMusic appears designed to reduce that problem by giving users more than one way to begin. That was one of the first things I wanted to test closely.

How I Structured the ToMusic Test

I did not use only one prompt and call it a review. I broke the process into several situations that reflect common creator behavior.

Scenario One Was Mood-Based Prompting

In the first scenario, I approached the platform without lyrics. I used simple descriptive intent: genre, atmosphere, pace, and emotional tone. This is the likely entry point for many beginners.

Scenario Two Was Lyric-Led Song Drafting

In the second scenario, I started with text. This matters because many people do not begin with production language. They begin with lines, phrases, or unfinished song sections. I wanted to see whether the system could interpret words in a way that felt musically coherent.

Scenario Three Was Repeat Generation

In the third scenario, I tested whether the same idea could produce multiple usable variations. This was important because one of the hidden weaknesses of some AI music tools is that they feel exciting once but unreliable over time.

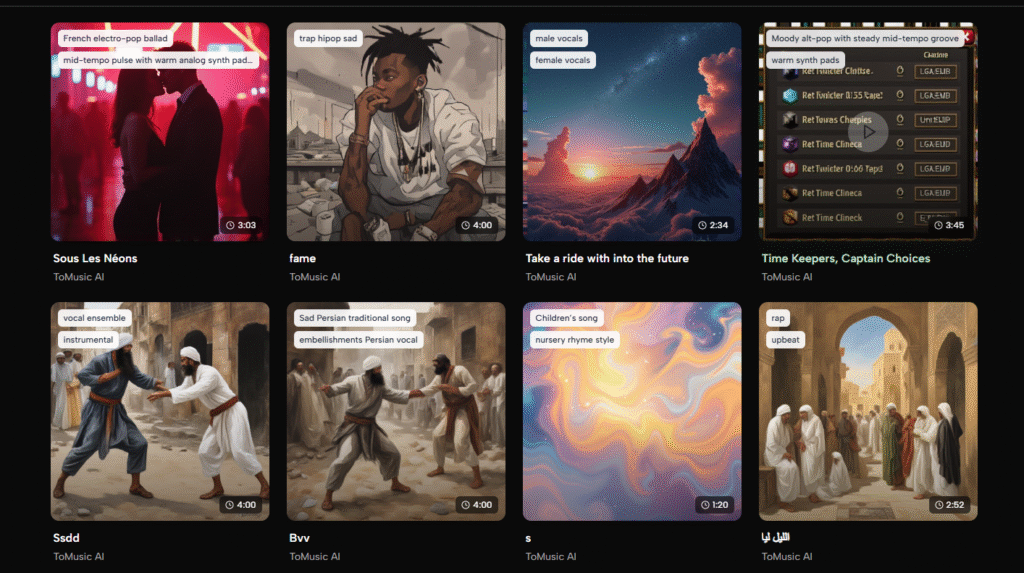

Scenario Four Was Asset Management

Finally, I paid attention to what happened after generation. According to its public materials, ToMusic includes a music library that stores outputs with titles, tags, descriptions, lyrics, and parameters. I wanted to see whether this part of the workflow actually mattered.

The First Impressions Were About Accessibility

The first strength I noticed was that the product framing feels straightforward. It does not assume the user is already a producer.

Starting Feels Manageable

Prompt-led entry lowers the emotional barrier. A user does not have to think in terms of signal chains, plugins, or arrangement software. They can begin with what they want the music to feel like.

This Helps Non-Musicians Immediately

That may sound obvious, but it changes the relationship between the user and the tool. Instead of asking, “Do I know enough to begin?” the user asks, “What do I want this track to express?”

That Change Is More Important Than It Sounds

Creative momentum often depends on starting before doubt grows too large. A platform that accepts natural-language intent gives users a better chance of acting while the idea is still alive.

The Interface Logic Supports a Faster First Draft

In my testing, the main advantage was not extreme detail control at the start. It was the ability to get to a first draft quickly enough that the idea stayed emotionally intact. For early-stage creativity, that is often the correct trade-off.

What Happened When I Used Simple Prompts

The first round of tests focused on broad musical direction. I avoided overloading the system with long instructions. Instead, I used prompts that described style and mood in normal language.

Broad Prompts Produced Broad Results

This was not surprising. When the direction was general, the outputs were often listenable but not sharply distinctive. They usually captured the emotional neighborhood, but not always the exact personality I imagined.

This Is a Useful Limitation to Understand

It would be easy to call this a flaw, but I think it is more accurate to call it a rule of the medium. Generative systems interpret direction. If the direction is loose, the result is often correspondingly broad.

The Fix Was Better Specificity

When I clarified mood, pacing, energy level, and instrument character, the results became more coherent. In practice, that means users benefit from treating prompts like creative briefs rather than casual guesses.

The Platform Responded Best to Directed Mood

The stronger outputs usually came when emotional tone and use case were both clear. Saying “sad piano song” is one thing. Saying “slow reflective piano-led song for a personal montage with warm but restrained emotion” tends to create a more useful frame.

That pattern is not unique to ToMusic, but the platform handled this kind of refinement in a way that felt approachable rather than technical.
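
One way to make the "creative brief" habit concrete is to assemble a prompt from named parts instead of typing it freehand. The sketch below is only an illustration of that idea. The `Brief` class and its field names are my own assumptions, not anything ToMusic exposes, and the output is just text to paste into a prompt box.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """Hypothetical creative brief; field names are illustrative, not a ToMusic API."""
    pace: str = ""
    mood: str = ""
    lead: str = ""
    form: str = "song"
    use_case: str = ""
    emotion: str = ""

    def to_prompt(self) -> str:
        # Join the descriptive parts that were filled in, skipping blanks.
        text = " ".join(p for p in (self.pace, self.mood, self.lead, self.form) if p)
        if self.use_case:
            text += f" for {self.use_case}"
        if self.emotion:
            text += f" with {self.emotion}"
        return text


# The loose request and the directed request from the paragraph above.
loose = Brief(mood="sad", lead="piano")
directed = Brief(
    pace="slow",
    mood="reflective",
    lead="piano-led",
    use_case="a personal montage",
    emotion="warm but restrained emotion",
)

print(loose.to_prompt())
print(directed.to_prompt())
```

The point of the structure is not automation. It is a reminder of which questions a loose prompt leaves unanswered: pace, use case, and emotional register are all blank in the first brief.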

Testing Lyrics Changed the Experience

The next part of the review involved lyric-led creation, and this is where the platform became more interesting.

Words Made the Tool Feel More Like Songwriting

A lot of AI music platforms can generate mood effectively. Fewer feel equally comfortable when the user begins from written lines. In my testing, ToMusic became much more compelling when I shifted from prompt-only requests to lyric-based direction.

Lyrics Add Structure Before Music Appears

When a user brings words, they are also bringing rhythm, emotional framing, and narrative hints. That gives the system more to interpret than genre labels alone.

This Makes the Output Easier to Judge

With lyric-led tests, it was easier to decide whether a result worked. The question was no longer only “Does this sound good?” It became “Does this serve the words?”

The Results Were Not Perfect, But They Were Useful

Some generations felt stronger than others, and that is worth saying plainly. Not every output felt like a finished song. But many felt like credible drafts. That matters because a credible draft is often enough to continue working.

Later in the process, I found myself thinking less about whether the system replaced traditional songwriting and more about whether it accelerated creative discovery. In that sense, ToMusic's text-to-music approach feels genuinely practical. It gives written language a faster path into audible form.

The Multi-Model Structure Matters More Than It First Appears

ToMusic publicly presents several models, each described as having different strengths. I think this is one of its most important product decisions.

Different Models Encourage Better Creative Hypotheses

When a platform treats every request as if one generation engine can solve everything, users often compensate by endlessly rewriting prompts. A multi-model structure changes that behavior. It invites the user to ask which kind of interpretation might fit the idea best.

That Reduces Blind Experimentation

Instead of treating every failure as a prompt problem, the user can also think in terms of model fit. That is a healthier workflow because it turns variation into a structured choice.

It Also Improves Confidence

In my experience, it is easier to stay calm when you know there is more than one valid route to a result. This matters because creative frustration often comes from feeling trapped in one unclear system.

Model Choice Makes Repetition More Valuable

Repeat generation became more interesting once I thought of the models not as technical labels but as alternate creative perspectives. The same idea could be tested under different assumptions, which made the platform feel less random and more like a workspace.

What the Music Library Adds to the Workflow

This part may sound less exciting than generation, but it turned out to be important.

Saved Outputs Make Comparison Real

As publicly described, the platform’s library stores songs with titles, tags, descriptions, lyrics, and parameters. In practice, this gives users a way to treat outputs as part of an ongoing process instead of disposable moments.

Creative Work Needs Memory

A common weakness in generative tools is that they produce many options but make it hard to remember what mattered about each one. When a system preserves context, comparison becomes easier.
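
To show why preserved context makes comparison easier, here is a minimal sketch of a saved output as a record. It uses the five fields the library is described as storing. Everything else is assumed for illustration: the `Track` class, the helper functions, and the sample parameter names and values are hypothetical and do not reflect ToMusic's actual data format.

```python
from dataclasses import dataclass, field


@dataclass
class Track:
    """Hypothetical saved output with the fields the library is described as storing."""
    title: str
    tags: list[str] = field(default_factory=list)
    description: str = ""
    lyrics: str = ""
    parameters: dict[str, str] = field(default_factory=dict)


def with_tag(library: list[Track], tag: str) -> list[Track]:
    # Return every saved track carrying the given tag, in saved order.
    return [t for t in library if tag in t.tags]


def differing_parameters(a: Track, b: Track) -> dict[str, tuple]:
    # Map each parameter whose value differs to its (a, b) pair of values.
    keys = sorted(set(a.parameters) | set(b.parameters))
    return {
        k: (a.parameters.get(k), b.parameters.get(k))
        for k in keys
        if a.parameters.get(k) != b.parameters.get(k)
    }


# Invented sample data: two drafts of one idea plus an unrelated track.
library = [
    Track("Montage v1", ["piano", "montage"],
          parameters={"model": "model-a", "prompt": "sad piano song"}),
    Track("Montage v2", ["piano", "montage"],
          parameters={"model": "model-b", "prompt": "sad piano song"}),
    Track("Ad sting", ["upbeat"]),
]

drafts = with_tag(library, "montage")
print([t.title for t in drafts])
print(differing_parameters(drafts[0], drafts[1]))
```

With the parameters kept alongside each draft, the question "why does version two sound different?" has a recorded answer, which here is the model choice and nothing else.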

That Encourages Iteration Instead of Chaos

People are more likely to refine ideas when previous attempts remain visible and organized. Without that, experimentation can quickly feel messy and discouraging.

This Helps More Than Power Users

At first glance, a library may seem like an advanced feature. I do not think it is. Beginners may benefit from it even more because it reduces the anxiety of losing progress.

Where ToMusic Felt Strongest

After several rounds of testing, a few strengths became clear.

It Lowers the Threshold for Beginning

The platform is approachable enough that non-musicians can start without much intimidation. That is a major advantage.

It Handles Both Prompt and Lyric Workflows

This gives it a wider audience than tools that feel optimized for only one input mode.

It Rewards Better Direction

In my testing, the platform did not punish specificity. On the contrary, it made better use of it. That is a healthy sign because it means creative skill still matters.

It Makes Reuse More Plausible

With multiple models and stored outputs, the platform feels suited to repeated experiments rather than one-off novelty use.

Where the Limitations Became Visible

A credible test should also describe where the platform does not solve everything.

The First Output Was Not Always the Best

This is probably the most important expectation to set. Useful results often appeared after more than one try. That is normal in generative systems, but users should know it upfront.

Revision Is Still Part of the Process

The platform accelerates drafting, but it does not remove the need to choose, compare, and sometimes regenerate.

Patience Improves the Experience

Users who treat the first result as a rough reveal rather than a final answer are likely to get more value.

Prompt Quality Still Shapes Outcome Quality

Loose direction usually produced looser music. Stronger inputs created stronger drafts. In other words, the platform expands access, but it does not eliminate the benefit of thoughtful intent.

Who I Think Will Benefit Most

The people most likely to benefit from ToMusic are not only musicians.

Songwriters With Unfinished Material

Someone with lyrics, hooks, or fragments can use the platform to test emotional direction quickly.

Content Creators and Small Teams

A creator making ads, explainers, reels, or short-form pieces can explore original music ideas without building a full production pipeline.

Curious Beginners

People who have always wanted to make music but never crossed the technical barrier now have a more approachable place to begin.

My Final View After Testing ToMusic

After spending time with ToMusic as a working platform rather than a demo, I think the most accurate description is this: it is a useful creative bridge. It does not replace musical judgment, and it does not eliminate iteration. But it meaningfully reduces the distance between concept and draft.

That matters because most creative projects do not fail from lack of ideas. They fail from lack of momentum. In my testing, ToMusic helped preserve momentum. It made it easier to move from feeling to sound, from words to structure, and from first attempt to revision. For a platform in this category, that is a serious achievement. The real value is not that it can generate music. The real value is that it makes creative exploration easier to continue.